Coulomb Matrix¶

Coulomb Matrix (CM) [1] is a simple global descriptor which mimics the electrostatic interaction between nuclei.

Coulomb matrix is calculated with the equation below.

The diagonal elements can be seen as the interaction of an atom with itself and are essentially a polynomial fit of the atomic energies to the nuclear charge \(Z_i\). The off-diagonal elements represent the Coulomb repulsion between nuclei \(i\) and \(j\).

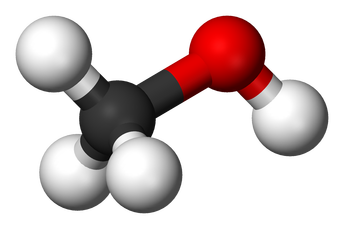

Let’s have a look at the CM for methanol:

In the matrix above the first row corresponds to carbon (C) in methanol interacting with all the other atoms (columns 2-5) and itself (column 1). Likewise, the first column displays the same numbers, since the matrix is symmetric. Furthermore, the second row (column) corresponds to oxygen and the remaining rows (columns) correspond to hydrogen (H). Can you determine which one is which?

Since the Coulomb Matrix was published in 2012 more sophisticated descriptors have been developed. However, CM still does a reasonably good job when comparing molecules with each other. Apart from that, it can be understood intuitively and is a good introduction to descriptors.

Setup¶

Instantiating the object that is used to create Coulomb matrices can be done as follows:

The constructor takes the following parameters:

-

CoulombMatrix.__init__(n_atoms_max, permutation='sorted_l2', sigma=None, seed=None, flatten=True, sparse=False)¶ - Parameters

n_atoms_max (int) – The maximum nuber of atoms that any of the samples can have. This controls how much zeros need to be padded to the final result.

permutation (string) –

Defines the method for handling permutational invariance. Can be one of the following:

none: The matrix is returned in the order defined by the Atoms.

sorted_l2: The rows and columns are sorted by the L2 norm.

eigenspectrum: Only the eigenvalues are returned sorted by their absolute value in descending order.

random: The rows and columns are sorted by their L2 norm after applying Gaussian noise to the norms. The standard deviation of the noise is determined by the sigma-parameter.

sigma (float) – Provide only when using the random-permutation option. Standard deviation of the gaussian distributed noise determining how much the rows and columns of the randomly sorted matrix are scrambled.

seed (int) – Provide only when using the random-permutation option. A seed to use for drawing samples from a normal distribution.

flatten (bool) – Whether the output of create() should be flattened to a 1D array.

sparse (bool) – Whether the output should be a sparse matrix or a dense numpy array.

Creation¶

After CM has been set up, it may be used on atomic structures with the

create()-method.

The call syntax for the create-function is as follows:

-

CoulombMatrix.create(system, n_jobs=1, verbose=False)[source]¶ Return the Coulomb matrix for the given systems.

- Parameters

system (

ase.Atomsor list ofase.Atoms) – One or many atomic structures.n_jobs (int) – Number of parallel jobs to instantiate. Parallellizes the calculation across samples. Defaults to serial calculation with n_jobs=1.

verbose (bool) – Controls whether to print the progress of each job into to the console.

- Returns

Coulomb matrix for the given systems. The return type depends on the ‘sparse’ and ‘flatten’-attributes. For flattened output a single numpy array or sparse scipy.csr_matrix is returned. The first dimension is determined by the amount of systems.

- Return type

np.ndarray | scipy.sparse.csr_matrix

Note that if you specify in n_atoms_max a lower number than atoms in your

structure it will cause an error. The output will in this case be a flattened

matrix, specifically a numpy array with size #atoms * #atoms. The number of

features may be requested beforehand with the

get_number_of_features()-method.

In the case of multiple samples, the creation can also be parallellized by using the n_jobs-parameter. This splits the list of structures into equally sized parts and spaws a separate process to handle each part.

Examples¶

The following examples demonstrate usage of the descriptor. These examples are also available in dscribe/examples/coulombmatrix.py.

No flattening¶

You can control whether the returned array is two-dimensional or one-dimensional by using the flatten-parameter

No Sorting¶

By default, CM is sorted by the L2-norm (more on that later). In order to get the unsorted CM it is necessary to specify the keyword permutation = “none” when setting it up.

Zero-padding¶

The number of features in CM depends on the size of the system. Since most machine learning methods require size-consistent inputs it is convenient to define the maximum number of atoms n_atoms_max in a dataset. If the structure has fewer atoms, the rest of the CM will be zero-padded. One can imagine non-interacting ghost atoms as place-holders to ensure the same number of atoms in every system.

Not meant for periodic systems¶

The CM was not designed for periodic systems. If you do add periodic boundary conditions, you will see that it does not change the elements.

Instead, the Sine Matrix and the Ewald Matrix <ewald_matrix> have been designed as periodic counterparts to the CM.

Invariance¶

A good descriptor should be invariant with respect to translation, rotation and permutation. No matter how you translate or rotate it or change the indexing of the atoms (not the atom types!), it will still be the same molecule! The following lines confirm that this is true for CM.

Options for permutation¶

The following snippet introduces the different options for handling permutation invariance. See [2] for more information on these methods.

sorted_l2 (default): Sorts rows and columns by their L2-norm.

none: keeps the order of the rows and columns as the atoms are read from the ase object.

random: The term random can be misleading at first sight because it does not scramble the rows and columns completely randomly. The rows and columns are sorted by their L2-norm after applying Gaussian noise to the norms. The standard deviation of the noise is determined by the additionally required sigma-parameter. sigma determines the standard deviation of the gaussian distributed noise determining how much the rows and columns of the randomly sorted matrix are scrambled. Feel free to try different sigma values to see the effect on the ordering. Optionally, you can specify a random seed. sigma and seed are ignored if permutation is other than “random”. This option is useful if you want to augment your dataset, similar to augmented image datasets where each image gets mirrored, rotated, cropped or otherwise transformed. You would need to create several instances of the randomly sorted CM in a loop. The advantage of augmenting data like this over using completely random CM lies in the lower number of “likely permutations”. Rows and columns of the CM are allowed to flip just so that the feature space (all possible CM) is smooth but also compact.

eigenspectrum: Only the eigenvalues of the matrix are returned sorted by their absolute value in descending order. On one hand, it is a more compact descriptor, but on the other hand, it potentially loses information encoded in the CM interactions.

- 1

Matthias Rupp, Alexandre Tkatchenko, Klaus-Robert Müller, and O. Anatole von Lilienfeld. Fast and accurate modeling of molecular atomization energies with machine learning. Phys. Rev. Lett., 108:058301, Jan 2012. doi:10.1103/PhysRevLett.108.058301.

- 2

Grégoire Montavon, Katja Hansen, Siamac Fazli, Matthias Rupp, Franziska Biegler, Andreas Ziehe, Alexandre Tkatchenko, Anatole V. Lilienfeld, and Klaus-Robert Müller. Learning invariant representations of molecules for atomization energy prediction. In F. Pereira, C. J. C. Burges, L. Bottou, and K. Q. Weinberger, editors, Advances in Neural Information Processing Systems 25, pages 440–448. Curran Associates, Inc., 2012. URL: http://papers.nips.cc/paper/4830-learning-invariant-representations-of-molecules-for-atomization-energy-prediction.pdf.